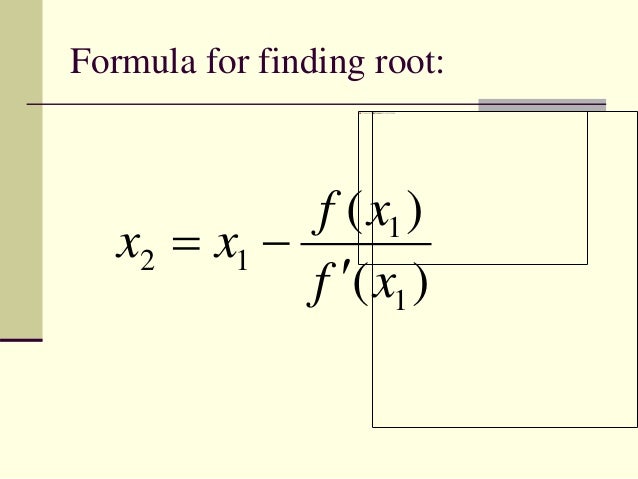

"Truth is much too complicated to allow anything but approximations." John von Neumann

Newton's method for solving equations is another numerical method for solving an equation $f(x) = 0$. It finds a solution with geometry: it is based on the geometry of a curve, using the tangent lines to the curve.

Saddle points are common in machine learning, or in fact in any multivariable optimization, and Newton's method attracts to saddle points. If you apply the multivariate Newton method, you get the following:

$$\mathbf{x}_{n+1} = \mathbf{x}_n - [\mathbf{H}f(\mathbf{x}_n)]^{-1} \nabla f(\mathbf{x}_n)$$

Newton's method directly attracts to a close point with zero gradient, which can be a saddle. Why not repel them instead? For example, saddle-free Newton reverses negative curvature directions, but it requires controlling the signs of eigenvalues. Newton's method also requires inverting the Hessian, which is costly and very unstable for noisy estimation: the estimate can be statistically blurred around $\lambda=0$ eigenvalues, which invert to infinity.

We can go from 1st order to 2nd order in small steps, e.g. by adding an update of just 3 averages to the momentum method we can simultaneously MSE fit a parabola in its direction for a smarter choice of step size. It would be good to do it online: instead of performing a lot of computation in a single point, try to split it into many small steps to exploit local information about the landscape. I have prepared an SGD overview lecture focused on 2nd order methods: slides:, video:

First order methods have very good theoretical guarantees about convergence and avoidance of saddle points, see Backtracking GD and modifications. Backtracking GD can be implemented in DNN, with very good performance. It allows big learning rates, which can be of the size of the inverse of the size of the gradient when the gradient is small. This is very handy when you converge to a degenerate critical point.

*Of course, Newton's method will not help you with L1 or other similar compressed sensing/sparsity promoting penalty functions, since they lack the required smoothness.
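The attraction of Newton's method to saddle points can be seen on a tiny example. The sketch below is only an illustration under assumed choices: the test function $f(x, y) = x^2 - y^2$, the starting point, the learning rate and all function names are hypothetical and not taken from the discussion above. One plain Newton step lands exactly on the saddle at the origin, while plain gradient descent moves away from it along the negative curvature direction.

```python
def grad(p):
    """Gradient of the assumed test function f(x, y) = x**2 - y**2."""
    x, y = p
    return (2.0 * x, -2.0 * y)

# The Hessian of f is constant and diagonal: diag(2, -2).
# One positive and one negative eigenvalue, so the origin is a saddle.
HESSIAN_DIAG = (2.0, -2.0)

def newton_step(p):
    """Pure Newton update p - H^{-1} grad f(p); H is diagonal, so divide per entry."""
    g = grad(p)
    return tuple(pi - gi / hi for pi, gi, hi in zip(p, g, HESSIAN_DIAG))

def gd_step(p, lr=0.1):
    """Plain gradient descent update with a fixed (assumed) learning rate."""
    g = grad(p)
    return tuple(pi - lr * gi for pi, gi in zip(p, g))

start = (1.0, 0.5)

# A single Newton step jumps to the zero-gradient point, here the saddle.
newton_point = newton_step(start)

# Gradient descent shrinks x but grows |y|, so it leaves the saddle region.
gd_point = start
for _ in range(20):
    gd_point = gd_step(gd_point)

print("Newton after 1 step:", newton_point)
print("GD after 20 steps:  ", gd_point)
```

Because the function is quadratic, the Newton step is exact, which is why it reaches the zero-gradient point in one move regardless of whether that point is a minimum or a saddle.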
Using Newton's method instead of gradient descent shifts the difficulty from the nonlinear optimization stage (where not much can be done to improve the situation) to the linear algebra stage (where we can attack it with the entire arsenal of numerical linear algebra preconditioning techniques).

Also, the computation shifts from "many many cheap steps" to "a few costly steps", opening up more opportunities for parallelism at the sub-step (linear algebra) level.

For background information about these concepts, I recommend the book "Numerical Optimization" by Nocedal and Wright.

You can compute Hessian matrix-vector products efficiently by solving two higher-order adjoint equations of the same form as the adjoint equation that is already used to compute the gradient (e.g., the work of two backpropagation steps in neural network training). For a method that relies only on such matrix-vector products, see, for example, the CG-Steihaug trust region method. Ill-conditioning slows the convergence of iterative linear solvers, but it also slows gradient descent equally or worse.
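To make the idea of a Hessian matrix-vector product without a Hessian concrete, here is a minimal sketch. Note that it swaps in a different technique from the adjoint approach described above: it approximates $Hv$ with a central difference of two gradient evaluations, which has a similar cost profile (two gradient-like computations) but is only approximate. The test function, the step size `eps` and all names are assumptions made for illustration.

```python
def grad_f(x):
    """Gradient of the assumed test function f(x) = x0**4 + x0*x1 + 2*x1**2."""
    x0, x1 = x
    return [4.0 * x0**3 + x1, x0 + 4.0 * x1]

def hessian_vector_product(grad, x, v, eps=1e-5):
    """Approximate H(x) v as (grad(x + eps*v) - grad(x - eps*v)) / (2*eps).

    Only two gradient evaluations are used; the Hessian is never formed.
    """
    x_plus = [xi + eps * vi for xi, vi in zip(x, v)]
    x_minus = [xi - eps * vi for xi, vi in zip(x, v)]
    g_plus = grad(x_plus)
    g_minus = grad(x_minus)
    return [(gp - gm) / (2.0 * eps) for gp, gm in zip(g_plus, g_minus)]

x = [1.0, 2.0]
v = [1.0, -1.0]
hv = hessian_vector_product(grad_f, x, v)

# For comparison: the exact Hessian at x is [[12*x0**2, 1], [1, 4]],
# so the exact product with v = [1, -1] is [12 - 1, 1 - 4] = [11, -3].
print(hv)
```

In an automatic differentiation framework the same product would normally be computed exactly rather than by finite differences; the sketch only shows that access to gradients is enough.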

More people should be using Newton's method in machine learning*. I say this as someone with a background in numerical optimization, who has dabbled in machine learning over the past couple of years. The drawbacks in answers here (and even in the literature) are not an issue if you use Newton's method correctly. Moreover, the drawbacks that do matter also slow down gradient descent the same amount or more, but through less obvious mechanisms.

Using linesearch with the Wolfe conditions or using trust regions prevents convergence to saddle points. A proper gradient descent implementation should be doing this too. The paper referenced in another answer points out problems with "Newton's method" in the presence of saddle points, but the fix they advocate is also a Newton method.

Using Newton's method does not require constructing the whole (dense) Hessian; you can apply the inverse of the Hessian to a vector with iterative methods that only use matrix-vector products (e.g., Krylov methods like conjugate gradient).
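The point about applying the inverse Hessian with only matrix-vector products can be sketched as follows. This is a bare-bones conjugate gradient solve for the Newton system $Hp = -\nabla f$, assuming a symmetric positive definite Hessian; it is not the CG-Steihaug method itself (there is no trust region), and it simply stops if it meets non-positive curvature. The quadratic test problem and all function names are hypothetical.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def conjugate_gradient(hvp, b, tol=1e-10, max_iter=50):
    """Solve H p = b using only products hvp(v) = H v; H is never formed.

    Assumes H is symmetric positive definite. Stops early if a direction
    of non-positive curvature is found, since plain CG is not valid there.
    """
    p = [0.0] * len(b)
    r = list(b)   # residual b - H p for the initial guess p = 0
    d = list(r)   # current search direction
    rr = dot(r, r)
    for _ in range(max_iter):
        if rr <= tol * tol:
            break
        hd = hvp(d)
        curvature = dot(d, hd)
        if curvature <= 0.0:
            break
        alpha = rr / curvature
        p = [pi + alpha * di for pi, di in zip(p, d)]
        r = [ri - alpha * hi for ri, hi in zip(r, hd)]
        rr_new = dot(r, r)
        d = [ri + (rr_new / rr) * di for ri, di in zip(r, d)]
        rr = rr_new
    return p

# Assumed test problem: f(x) = 0.5 x^T H x - c^T x,
# with H = [[4, 1], [1, 3]] and c = [1, 2]; the minimizer solves H x = c.
def hvp(v):
    return [4.0 * v[0] + 1.0 * v[1], 1.0 * v[0] + 3.0 * v[1]]

def grad(x):
    hx = hvp(x)
    return [hx[0] - 1.0, hx[1] - 2.0]

x = [2.0, 1.0]
g = grad(x)
step = conjugate_gradient(hvp, [-gi for gi in g])  # Newton step: H p = -grad f(x)
x_new = [xi + si for xi, si in zip(x, step)]

print(x_new)  # close to the minimizer [1/11, 7/11]
```

In a real problem `hvp` would be replaced by a routine that returns Hessian-vector products at the current iterate, and the solve would usually be wrapped in a line search or trust region, as discussed above.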
